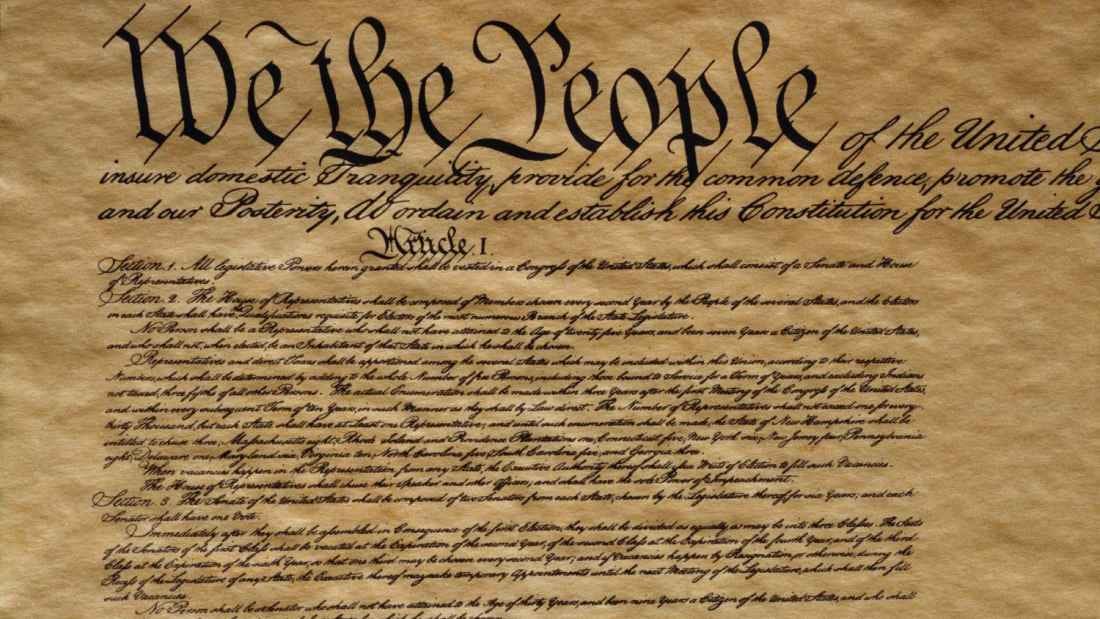

The US Constitution was written by AI, apparently

An AI detector thinks the authors of the United States Constitution had a little help from ChatGPT

AI is everywhere, and it’s becoming harder and harder to ascertain whether something has been created by a human or through the power of artificial intelligence. But did you know the US Constitution was also written by AI in 1787?

Now, that’s obviously not true. But someone put the United States Constitution through an AI detector, and the results were interesting, to say the least.

According to the detector, there was a 92.26% chance that the Constitution was generated using AI like ChatGPT. And if that’s the case, everything we knew is a lie.

➡️ The Shortcut Skinny: AI detector fail

😲 An AI detector has made a controversial claim

🤣 Apparently, the US Constitution was created by AI

👎 It ultimately shows that AI detectors don’t work

🤖 And more safeguards need to be put in place

But of course that’s not true, so take off your tinfoil hat for a second. What it does show is that AI detectors are woefully lacking in their ability to reliably determine whether a piece of text has been generated by robots. And that’s a real concern.

AI is advancing at a rapid pace, but when it comes to safeguards to protect people from misinformation or prevent bad actors from submitting work that isn’t theirs, we’re basically living in the Wild West right now.

If further evidence were needed, a German artist recently tricked the Sony World Photography Awards 2023 judges with an AI-generated image. He won the open creative category but refused the award, saying he hopes his victory will open up a discussion about the impact of AI moving forward.

And a conversation definitely needs to happen. AI not only helps people answer questions, improve their work, come up with ideas, and even make stunning pieces of art, but it also threatens to make certain jobs (like mine) obsolete. On top of that, it’s a breeding ground for unethical practices such as cheating, manipulation, dishonesty and more.

Not everyone is using AI in this way, of course. People are using AI in all sorts of ingenious ways, and the appeal is immediately obvious. Just as we’ve grown used to telling Alexa to set a timer for 10 minutes while cooking or taking effortless photos with our smartphones, we love things that make our lives more convenient.

But even though the majority of people will likely use AI to streamline certain aspects of their lives or improve their workflow, more steps need to be taken to make it easier to detect when something really is AI-generated. We can’t rely on people to be transparent, unless they get caught, that is (cough, CNET).

Tech leaders, including Elon Musk, have called for a pause of at least six months on training AI systems more powerful than GPT-4, citing “profound risks to society and humanity.” The open letter, published by the Future of Life Institute, a nonprofit organization, says:

“Contemporary AI systems are now becoming human-competitive at general tasks, and we must ask ourselves: Should we let machines flood our information channels with propaganda and untruth? Should we automate away all the jobs, including the fulfilling ones?

“Should we develop nonhuman minds that might eventually outnumber, outsmart, obsolete and replace us? Should we risk loss of control of our civilization? Such decisions must not be delegated to unelected tech leaders. Powerful AI systems should be developed only once we are confident that their effects will be positive and their risks will be manageable.”

Some countries have already taken action against the threat of AI. Italy has temporarily banned ChatGPT while its data-protection regulator looks into privacy concerns, but it appears other countries are less concerned. Personally, I’d like to see more being done to regulate AI and, at the very least, systems in place that can categorically prove whether something has been created by a human or a robot.