Everyone's about to become a CEO. Nvidia says your GPU and AI are why

The people building AI's engine gathered at HumanX in San Francisco this week. Here's what they actually said

Picture this: you wake up tomorrow, and you’re the CEO of your own company. You didn’t hire anyone. You didn’t write a single job posting. But you have a full staff of AIs handling your emails, researching your decisions, managing your schedule, and solving problems you haven’t even thought to ask about yet.

That’s not a pitch for a new app. That’s what Bryan Catanzaro, Nvidia’s VP of Applied Deep Learning Research, said is coming – and probably sooner than you think.

“When everyone becomes the CEO of their own company, full of AIs that are solving problems for them,” Catanzaro told a standing-room-only audience at the HumanX conference, “that’s a new dimension of intelligence.”

If you own an Nvidia GPU – whether you bought it to play games or to run AI locally – you already have a small piece of the hardware making this possible. And if the executives at the San Francisco AI event are to be believed, what a single chip can do is about to get a lot bigger.

The chip inside your PC is at the center of an intelligence arms race

Nvidia isn’t just a gaming company anymore – most readers of The Shortcut already know that from our past Substack posts. But what’s less understood is why AI keeps demanding more compute, and why that demand shows no signs of slowing down.

Catanzaro laid it out in unusually clear terms. He described four distinct “scaling laws” – basically four separate reasons why more computing power keeps translating directly into smarter AI.

The first is pre-training: feeding AI models more data, on bigger systems, still makes them meaningfully smarter.

The second is post-training: letting models practice on harder, more diverse problems sharpens their thinking.

The third is what happens at the moment you actually use AI – the more time a model gets to “think” before answering, the harder the questions it can solve.

The fourth is the CEO scenario: agents, meaning AIs that work autonomously on your behalf, create a whole new category of demand for processing power.

“We continue to see that compute and intelligence are the same thing,” Catanzaro said on stage at HumanX.

For gamers, this framing might feel familiar. More VRAM, faster memory bandwidth, higher shader counts – these are the specs that determine whether your GPU can run the next big game. The same logic applies to running bigger and better AI models.

Efficiency is the new benchmark

There’s a catch, though. You can’t just keep throwing hardware at the problem forever.

Catanzaro was candid about the constraints the industry is running up against. The world can only build so many chips, source so much power, and construct so many data centers at once. So the conversation has started to shift from raw power to efficiency – doing more with what’s already there.

“As we figure out how to do that,” he said, “we’re gonna continue to drive intelligence forward.”

Gamers will recognize this instinct immediately. The hunt for better performance-per-watt has defined GPU generations for years. The same ethos – squeezing more out of every joule – is now the central engineering challenge of the AI era.

Outside of Nvidia, Google recently introduced TurboQuant, a compression algorithm that the company says reduces key-value cache memory usage by up to 6x – with no accuracy loss and no retraining required. It’s the kind of efficiency gain that changes the math on what it costs to run AI at scale, and it fits neatly into the same story Catanzaro was telling on stage: intelligence and efficiency are increasingly the same thing.
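To put that 6x figure in perspective, here is a back-of-the-envelope sketch in Python. The formula is the standard estimate for key-value cache size in a transformer; the model dimensions are hypothetical and are not taken from TurboQuant or any specific model, and the sketch simply assumes the full 6x reduction applies.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, seq_len, bytes_per_value=2, batch=1):
    """Estimate key-value cache size for one transformer model.

    The leading 2 covers the two tensors (keys and values) stored per layer.
    bytes_per_value=2 assumes 16-bit values.
    """
    return 2 * layers * kv_heads * head_dim * seq_len * bytes_per_value * batch


GIB = 1024 ** 3

# Hypothetical model: 32 layers, 8 key-value heads of size 128,
# holding a 32,768-token conversation in memory.
baseline = kv_cache_bytes(layers=32, kv_heads=8, head_dim=128, seq_len=32_768)

# Assumption: the best-case 6x reduction quoted in the article.
compressed = baseline / 6

print(f"Uncompressed cache: {baseline / GIB:.2f} GiB")   # 4.00 GiB
print(f"With 6x compression: {compressed / GIB:.2f} GiB")  # 0.67 GiB
```

Under these assumptions, a cache that would eat 4 GiB of VRAM for one long conversation shrinks to well under 1 GiB, which is the difference between fitting on a consumer GPU alongside the model and not fitting at all.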

‘We’re always on the brink of disaster’ – in a good way

Here’s where it gets interesting. Designing chips for AI is, by Catanzaro’s own description, a nerve-wracking business. Nvidia has to make massive bets – locking in chip designs years in advance – for AI workloads that don’t fully exist yet.

“We’re always on the brink of disaster,” he said, with a kind of cheerful honesty that you don’t usually get from a VP at one of the world’s most valuable companies. “I don’t want to minimize the challenge.”

But he wasn’t being pessimistic. His point was that Nvidia’s entire operation is built around conviction – the willingness to put all the chips (literally) on one bet and see it through. There’s no hedging, no portfolio approach, no safety net. It either works or it doesn’t.

“We’re gonna put all of our eggs in one basket,” he said, “and it either is gonna go great, or it’s gonna go terrible. And we’re voting for great.”

Given that Nvidia’s stock has been one of the defining stories of the past two years, “voting for great” appears to be working out.

The part of AI nobody talks about: making it actually run

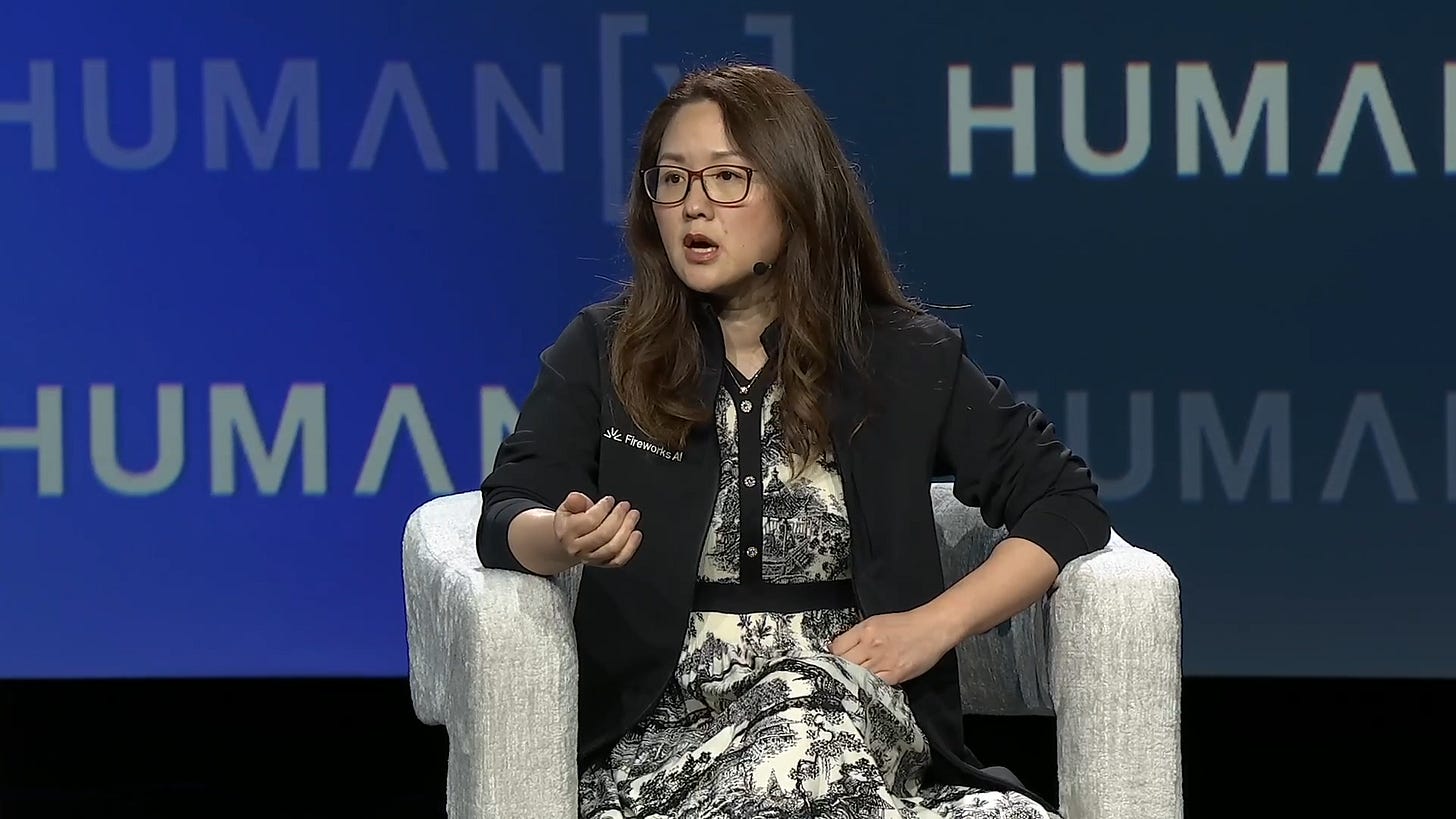

While Catanzaro was speaking from the chip side of the equation, Lin Qiao – co-founder and CEO of Fireworks AI, a company that specializes in deploying AI models at scale – offered a view from the other end of the pipeline.

Her argument was simple: building a great AI model is only half the battle. Making it fast, affordable, and reliable enough for real-world use is its own enormous challenge – and one that tends to get less glamorous coverage than the model launches themselves.

“Pre-product market fit, quality, quality, quality,” she said. “Post-product market fit is efficiency, efficiency, cost, cost, cost.”

She put a sharper point on it with a warning that’s already playing out at some companies: “There are companies that are just going to scale into bankruptcy.” The idea is that an AI feature that’s expensive to run in small tests becomes ruinously expensive when millions of people start using it – and a lot of companies haven’t done that math yet.
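The math Qiao is talking about is simple enough to sketch in a few lines of Python. Every number below is a hypothetical placeholder (user counts, usage per user, and the price per million tokens are illustrative, not figures from Fireworks AI or any provider); the point is only that the bill grows in lockstep with usage.

```python
def monthly_inference_cost(users, requests_per_user_per_day,
                           tokens_per_request, usd_per_million_tokens, days=30):
    """Estimate a month's token bill for one AI feature."""
    tokens = users * requests_per_user_per_day * tokens_per_request * days
    return tokens / 1_000_000 * usd_per_million_tokens


# Hypothetical pilot: 1,000 testers, 10 requests a day, 2,000 tokens each,
# at an assumed $5 per million tokens.
pilot = monthly_inference_cost(1_000, 10, 2_000, 5.0)

# Same feature, same usage pattern, rolled out to 5 million users.
launch = monthly_inference_cost(5_000_000, 10, 2_000, 5.0)

print(f"Pilot:  ${pilot:,.0f} per month")   # $3,000 per month
print(f"Launch: ${launch:,.0f} per month")  # $15,000,000 per month
```

In this sketch the feature costs about $3 per user per month at any scale. That is easy to ignore when it adds up to $3,000, and much harder when it adds up to $15 million and the feature is bundled into a product that earns less than that per user.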

Qiao also made a broader point about where AI’s real untapped value lies. It’s not in the public internet data that trains the big foundation models. It’s in the private data locked inside businesses, hospitals, law firms, and yes, your own files. That data, she argued, is where the next wave of genuinely useful, personalized AI will come from – and it’s barely been touched.

Build for where AI will be, not where it is

Denis Yarats, co-founder and CTO of Perplexity – the AI-powered search engine that’s been quietly winning over users who find ChatGPT too open-ended – brought the discussion back down to earth with a piece of advice aimed squarely at anyone building products right now.

The most common mistake he sees? Designing for today’s AI instead of tomorrow’s.

“You have to build for what it is going to be like six or 12 months ahead, never what is now,” Yarats said. “Because if you do that, you’re always going to be behind.”

It’s the kind of thing that sounds obvious until you realize how few companies actually do it. The AI landscape has shifted so dramatically and so quickly that products built around the limitations of 18-month-old models can feel instantly dated. The teams winning, Yarats implied, are the ones who design with the assumption that the underlying AI will keep getting better – and build accordingly.

HumanX was at capacity, but AI’s ceiling isn’t

It was standing-room-only in both the main event hall and the spillover room for this HumanX talk. The picture that emerged from Monday’s stage wasn’t one of an industry hitting a ceiling. It was an industry that’s still in the early, steep part of the curve – working through constraints of power, cost, and deployment speed, but genuinely optimistic about where it’s headed.

Qiao put it most plainly. Token costs – essentially, the price you pay every time an AI thinks – will drop dramatically as the stack matures. AI is already reaching beyond software engineers, she noted. Her head of finance uses it for forecasting. Her legal team built tools with it. She mentioned an Uber driver she’d spoken to who was using AI to write music.

“It is everywhere,” she said. “It’s very accessible.”

The CEO of your own AI company is still a few years out. But if Monday night was any indication, the people building the engine are already well past the drawing board.